Introduction

The government has raised concerns over the misuse of Grok, the AI tool of social media platform X, to generate and circulate sexually explicit and derogatory content, particularly targeting women and children.

Ethical Issues arising out of AI use on Social Media

- Surveillance Tool: AI algorithms rely on massive data collection about users' behaviours, preferences, and personal traits.

- Social media companies often harvest detailed personal data under the guise of personalizing experience, creating a form of surveillance capitalism.

- Privacy Invasion: Users typically lack full awareness or informed consent about how their information is collected and exploited.

- Through this invasion of privacy, not only are users' online actions tracked, but AI can also infer sensitive traits (interests, beliefs, even moods) without permission.

- Misinformation: AI has greatly amplified the spread of misinformation, ranging from political disinformation campaigns (e.g. propaganda bots influencing elections) to conspiracy theories (such as anti-vaccine content).

- AI techniques such as deepfakes (synthetic images or videos that replace original content) and voice skins or voice clones of public figures (manipulated audio) are effective at spreading altered facts.

- Bias and Discrimination: AI systems can perpetuate biases present in their training data or design, since algorithms cannot recognise the stereotypes embedded in that data.

- Such algorithmic bias can marginalize voices and entrench inequality.

- Psychological Impact: By design, AI-driven algorithms are optimized for engagement, often promoting sensational or validating content that keeps people scrolling.

- This can foster addiction-like usage patterns and contribute to anxiety, depression, or distorted self-image – especially among younger users.

- Social Divide: Algorithmic personalization can create polarized communities (echo chambers), reducing exposure to diverse viewpoints and fragmenting the public sphere.

- Violation of Dignity: AI tools on social media can be misused to violate individual consent and dignity.

- The generation of non-consensual explicit and deepfake images/videos is effectively AI-assisted harassment.

Stakeholders and their interests

| Stakeholders | Interests/Responsibilities |
| --- | --- |
| Social Media Platforms | |
| Users and Society | |
| Government and Regulators | |
| AI Developers | |

Regulations on Ethical Use of AI on social media platforms

- Laws and Rules: The Information Technology (IT) Act, 2000 and the IT (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 form the backbone of social media regulation.

- IT Act and Rules: Platforms are required under these rules to exercise due diligence, for example by swiftly removing illegal or obscene content and maintaining grievance redressal systems.

- Non-compliance can lead to loss of safe harbour protection under Section 79 of the IT Act, which currently shields social platforms from liability for user posts.

- Digital Personal Data Protection (DPDP) Act, 2023: It aims to ensure that data fiduciaries (including platforms that control AI systems) comply with prior-consent requirements, data protection measures, and adequate grievance redressal measures.

- Laws protecting Fundamental Rights: Laws such as the Indecent Representation of Women (Prohibition) Act and the Protection of Children from Sexual Offences (POCSO) Act make it illegal to create or share obscene material, especially involving women or minors.

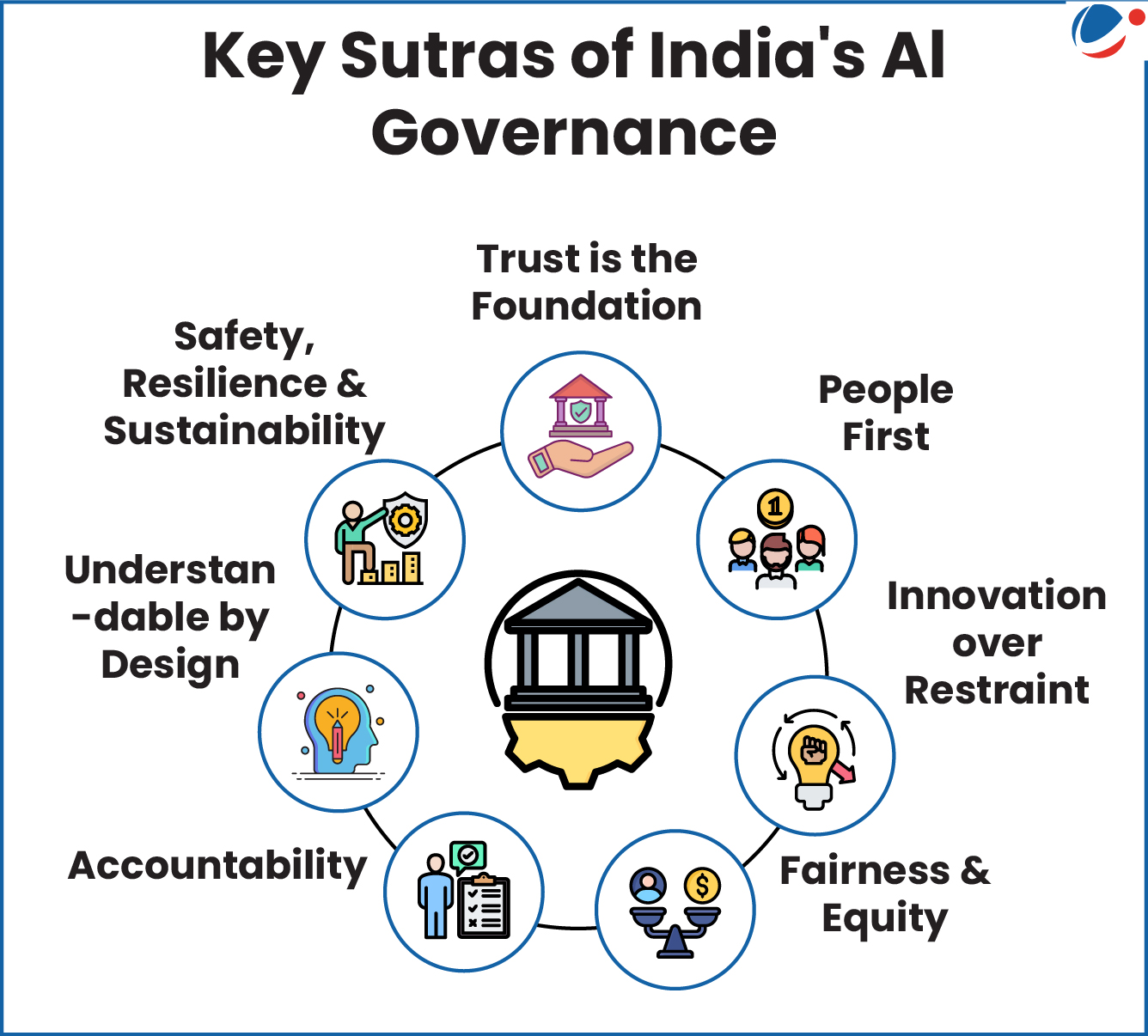

- AI-Specific Guidelines: The Ministry of Electronics and Information Technology (MeitY) issued the India AI Governance Guidelines to enable safe and trusted AI innovation.

- It presented seven sutras for ethical AI governance (see infographic).

- Global Regulations: The European Union has taken the lead with the landmark EU Artificial Intelligence Act, adopted in 2024.

- This Act is the world's first comprehensive AI law. It categorizes AI systems by risk and prohibits unacceptable-risk AI practices, such as AI that involves subliminal manipulation of users or exploitative algorithms that seriously distort human behavior.

- Ethical AI Principles: Globally, there's movement toward ethical AI principles endorsed by bodies like the OECD and UNESCO, which, while non-binding, pressure companies to uphold values like fairness, transparency, privacy, and human oversight in AI systems.

- In addition, platforms such as UNESCO's Global AI Ethics and Governance Observatory serve as a shared resource for finding solutions to the most pressing challenges posed by AI.

Conclusion

AI's role in social media is a double-edged sword: it offers exciting possibilities to enrich our digital interactions, yet it also poses significant ethical questions. Social media platforms should integrate strong AI safeguards, such as effective content filters and clear labelling of AI-generated content. Developers must follow ethics-by-design principles, using diverse data, reducing bias, and protecting privacy. Governments should strengthen regulations, mandate labelling and audits, and penalize misuse, while international cooperation should ensure common standards.

Check Your Ethical Aptitude

You are posted as Joint Secretary in the Government of India. Over the last 48 hours, your office has received a surge of complaints from parents of school-going children and women professionals. The complaints state that AI-generated sexually explicit images are being created through prompts on a popular social media platform's integrated AI feature, and then circulated in public threads and group chats. Several victims report that their publicly available photographs have been altered into non-consensual sexualised visuals. A few complaints involve minors.

The platform's India compliance team claims that it is an "intermediary" and cannot pre-screen everything, and that strong takedowns and restrictions will reduce user engagement and harm business. Meanwhile, women's groups and the media demand immediate action, while a digital rights collective warns against knee-jerk censorship and insists on transparency and due process in takedown decisions.

You have 72 hours to submit a reasoned, legally sustainable, and ethically defensible response plan that prevents further harm, supports victims, and preserves democratic values and trust in online governance. Against this backdrop, answer the following questions:

(a) Identify the key stakeholders and the ethical issues involved.

(b) What options are available to you as a public authority in the immediate term? Critically evaluate them.

(c) Recommend a course of action, with ethical justification and a short-term implementation plan.